Patients Have AI-Disconnect When it Comes to Their Health Care – Pew Research Center Insights

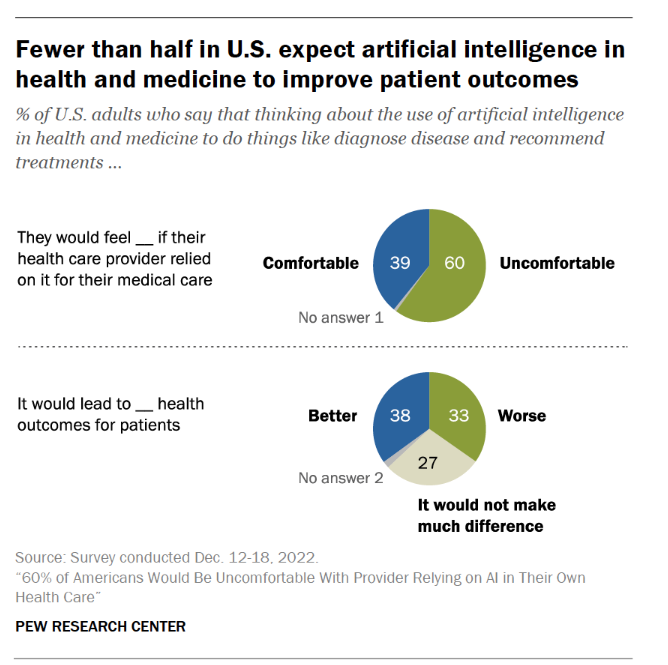

Most U.S. health consumers think AI is being adopted in American health care too quickly, feeling “significant discomfort…with the idea of AI being used in their own health care,” according to consumer research from the Pew Research Center.

The top-line is that 60% of Americans would be uncomfortable with [their health] provider relying on AI in their own health care, found in a consumer survey fielded in December 2022 among about 11,000 U.S. adults.

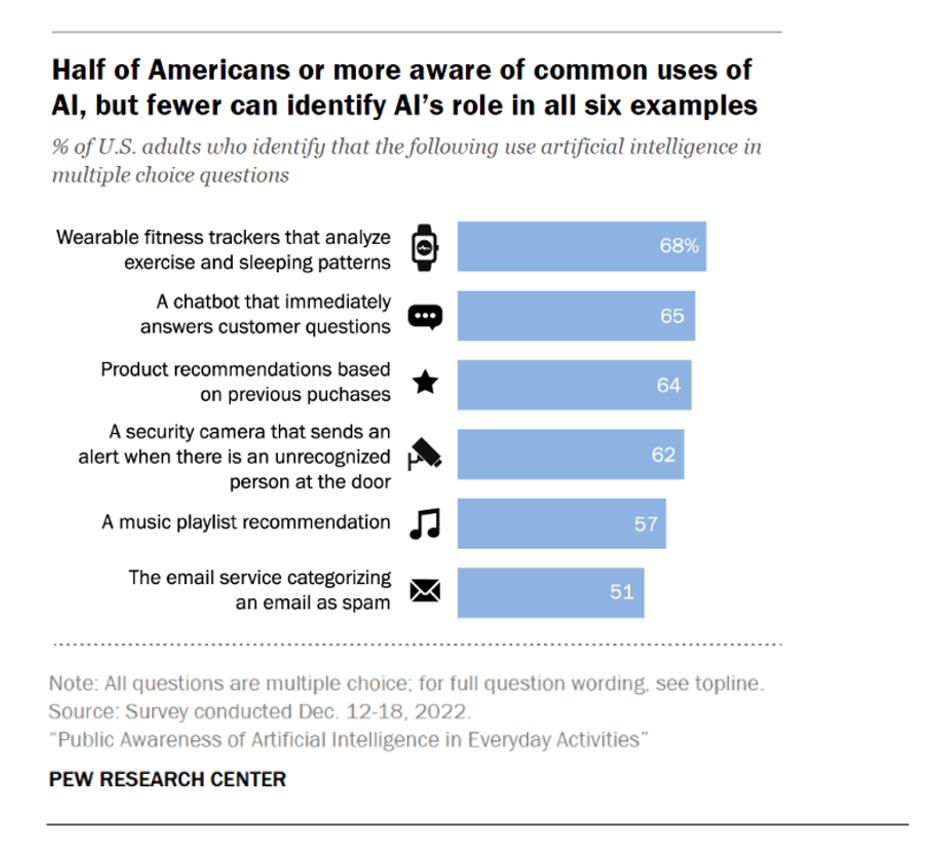

Most consumers who are aware of common uses of AI know about wearable fitness trackers that can analyze exercise and sleep patterns, a leading health/wellness application for the technology. Other commonly recognized examples of AI in everyday living included chatbots that answer customers’ questions, product recommendations based on past purchases, and security cameras that send alerts when they “see” an unrecognized person at the door.

Pew Research Center has published several analyses based on the data collected in the December 2022 consumer study. I’ll focus on a few of the health/care data points here, but I recommend reviewing the full set of reports to get to know how U.S. consumers are looking at AI in daily life.

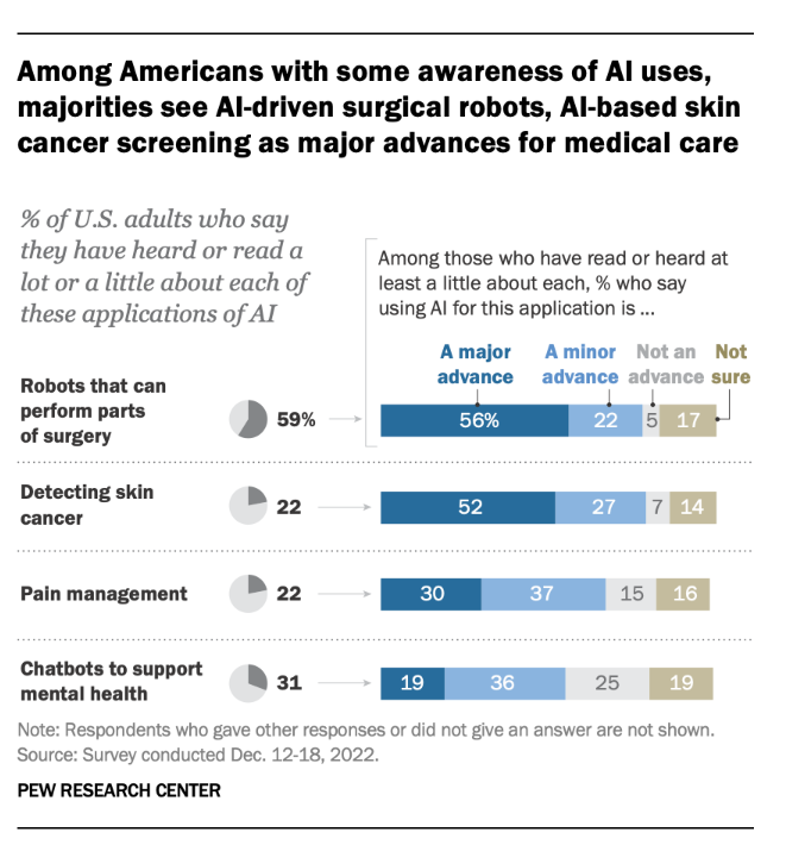

Among U.S. adults who are aware of AI applications, over one-half view robots that can perform surgery, as well as AI applications that can detect skin cancer, as major advances in medical care.

For pain management and chatbots for mental health? Not so much. These are seen less as major advances than as minor ones, which about one-third of people perceive.

For mental health, 46% of people thought AI chatbots should only be used by people who are also seeing a therapist. This view was held by about the same share of people who had heard about AI chatbots for mental health as those who had not.

Fewer than half of people expect AI in health and medicine to improve patient outcomes. Note that one-third of people expect AI to lead to worse health outcomes.

Medical errors continue to challenge American health care. About 4 in 10 people see the potential for AI to reduce the number of mistakes made by health care providers, as well as the opportunity for AI to address health care biases and health equity.

This is an important opportunity for AI applications to address in U.S. health care: two in three Black adults say bias based on patients’ race or ethnicity continues to be a major problem in health and medicine, with another one-quarter citing bias as a minor problem, according to the Pew study.

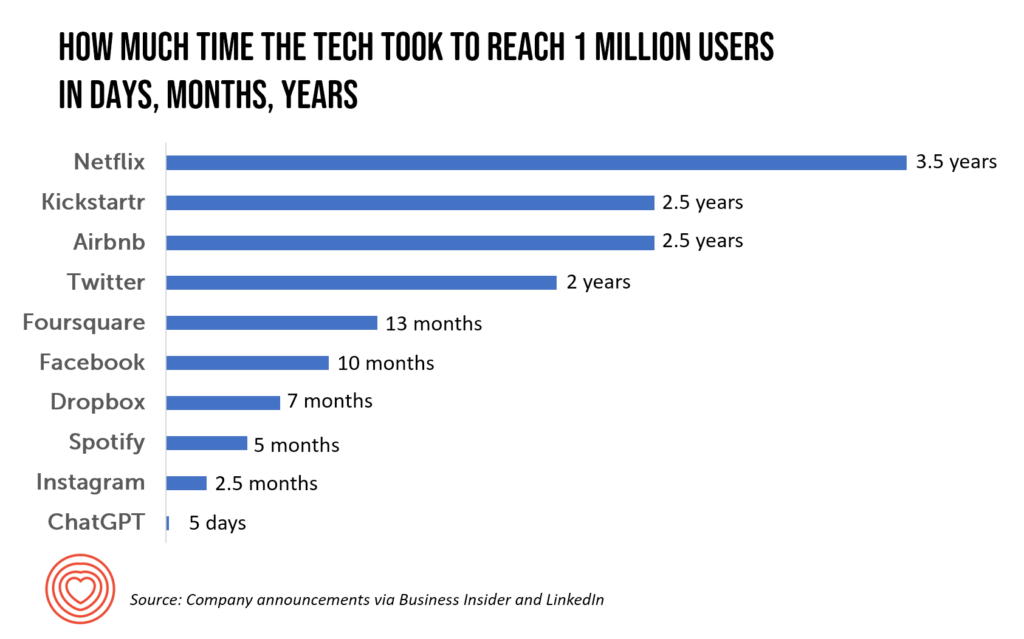

Health Populi’s Hot Points: As industry continues to move faster and faster toward AI, and now the hockey-stick S-curve of ChatGPT adoption, mass media are covering the topic to help consumers keep up with the technology. USA Today published a well-balanced and well-researched piece titled “ChatGPT is poised to upend medical information. For better and worse.”

In her coverage, Karen Weintraub reminds us: “ChatGPT launched its research preview on a Monday in December [2022]. By that Wednesday, it reportedly already had 1 million users. In February, both Microsoft and Google announced plans to include AI programs similar to ChatGPT in their search engines.”

Dr. Ateev Mehrotra of Harvard and Beth Israel Deaconess, consistently a source of great information and evidence-based health care, added, “The best thing we can do for patients and the general public is (say), ‘hey, this may be a useful resource, it has a lot of useful information, but it often will make a mistake and don’t act on this information only in your decision-making process.’”

And Dr. John Halamka, now heading up the Mayo Clinic Platform, concluded that, while AI and ChatGPT won’t replace them, “doctors who use AI will probably replace doctors who do not use AI.” Dr. Halamka also recently discussed AI and ChatGPT developments in health care on an episode of the AMA Update webcast.

From the patient’s point of view, check out Michael L. Millenson’s column in Forbes discussing how, in cancer, AI can empower patients and change their relationships with doctors and the care process.

A recent essay in The Conversation, co-written by a medical ethicist, explored a range of ethical challenges that ChatGPT’s adoption in health care could present. Privacy breaches of patient data, erosion of patient trust, how to properly generate evidence of the technology’s usefulness, and assuring safety in health care delivery are among the risks the authors call out, as well as the potential of further entrenching a digital divide and health disparities.

Vox published a piece this week arguing for slowing down the adoption of AI. Sigal Samuel asserted, “Pumping the brakes on artificial intelligence could be the best thing we ever do for humanity.” She argues that “Progress in artificial intelligence has been moving so unbelievably fast lately that the question is becoming unavoidable: How long until AI dominates our world to the point where we’re answering to it rather than it answering to us?”

Sigal debunks three objections that AI proponents raise in this bullish, go-go nascent phase of adoption:

- Objection 1: “Technological progress is inevitable, and trying to slow it down is futile”

- Objection 2: “We don’t want to lose an AI arms race with China,” and,

- Objection 3: “We need to play with advanced AI to figure out how to make advanced AI safe.”

Yesterday, Microsoft and Nuance announced their automated clinical documentation tool embedded with GPT-4 and its “conversational” (i.e., chat) model.

Given the fast-paced adoption of AI in health care, with ChatGPT as a use case, we will all be affected by this technology as patients and caregivers, and as workers in the health/care ecosystem. It behooves us all to stay current, stay honest and transparent, and remain open to learning what works and what doesn’t. And to ensure trust among patients, clinicians and the larger health care system, we must operate with a sense of privacy- and equity-by-design, respecting people’s values and worth, and act with transparency and respect for all.

In a hopeful mode, Dr. John Halamka concluded his discussion on the AMA Update with this optimistic view: “So how about this: if in my generation, we can take out 50% of the burden, the next generation will have a joy in practice.”

Concluding this discussion for now, wishing you joy in your work- and life-flows!